In my last post (“What is Argo?”), I outlined why enterprise localization automation can feel like a constant uphill battle.

Large enterprises commonly run multiple content management systems (CMSs), each with unique workflows and requirements. As a result, out-of-the-box integrations between CMSs and translation management systems (TMSs) are often insufficient or brittle. To make matters worse, some CMSs aren’t designed with localization in mind, while others are homegrown. Meanwhile, most stakeholders don’t work in the TMS, so there’s no seamless way to coordinate their work. Consequently, localization teams end up stitching workflows together with spreadsheets, scripts, and general-purpose automation tools that weren’t built for localization. These approaches rarely scale.

Some enterprises try to solve this by building localization platforms in-house. A few have the resources and capabilities, but most quickly run into a sobering reality: building one is hard, and maintaining it is even harder when the foundation isn’t solid. Technical debt tends to accumulate when platform builders lack deep localization expertise or aren’t resourced for the long term. Over time, course correction becomes much harder and more expensive, and localization teams struggle to keep up with the business.

Some readers told me they’ve seen this firsthand and asked how we solve these problems at Spartan. This post explains the principles we follow when building enterprise localization platforms, with Argo as the foundation.

Why platforms fail: It’s rarely the technology

In my 25 years of deploying enterprise localization solutions, I’ve found that technology is rarely the main reason platforms fail.

More often, the major obstacles are:

- Limited experience building enterprise localization platforms

- Poorly defined or under-scoped requirements at the outset

That’s why the first step in building an enterprise localization platform is putting the right team in place, on both the customer side and the technology provider side.

Without the right team, the project becomes a constant struggle. No amount of technology will succeed at the enterprise level unless the builders have sufficient experience, a deep understanding of the requirements, and the right mindset.

The engineering team’s job is to make users’ lives easier and deliver value to the organization. The system is a means to that end, not an end in itself. Ideally, you want a team that shares this mentality.

We usually start with a discovery process to understand the customer’s unique requirements, constraints, and operating model.

Skip that step, and what you deploy likely won’t deliver the results you’re looking for.

Requirements and specifications will also keep evolving, both during implementation and over the platform’s lifetime. That’s why strong project and product management are essential, particularly during the initial build-out phase.

Operational capabilities

In our experience, these are some of the core operational capabilities an enterprise localization platform should have:

- Modular by design (isolate outages, add or swap components without disruption)

- Request governance (enforce clear rules, prevent problems up front)

- Adaptable orchestration (edit mid-flight, handle exceptions, support human intervention)

- System of record (maintain a full audit trail in one place)

- Cross-team collaboration (coordinate quickly to resolve issues)

- End-to-end visibility (track operational metrics across the ecosystem, not just in the TMS)

With that framing in mind, here are six ways Argo is designed to deliver these capabilities.

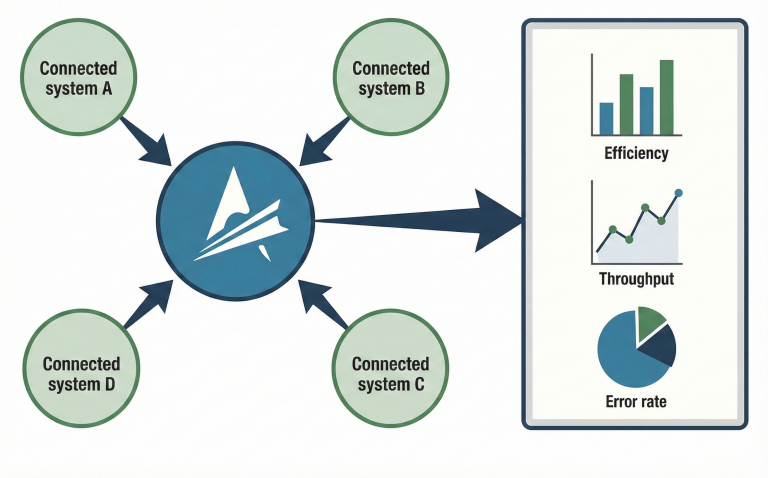

#1 – Hub-and-spoke, plug-and-play architecture

Principle: Modular by design (isolate outages, add or swap components without disruption)

Enterprise customers want options. They want to swap or migrate components without re-architecting everything. They want to pilot new tools safely, run legacy and new systems in parallel for A/B testing, and contain outages so they don’t cascade across the platform. And they want a platform that can support a broad, evolving set of capabilities.

A hub-and-spoke, plug-and-play architecture delivers exactly that.

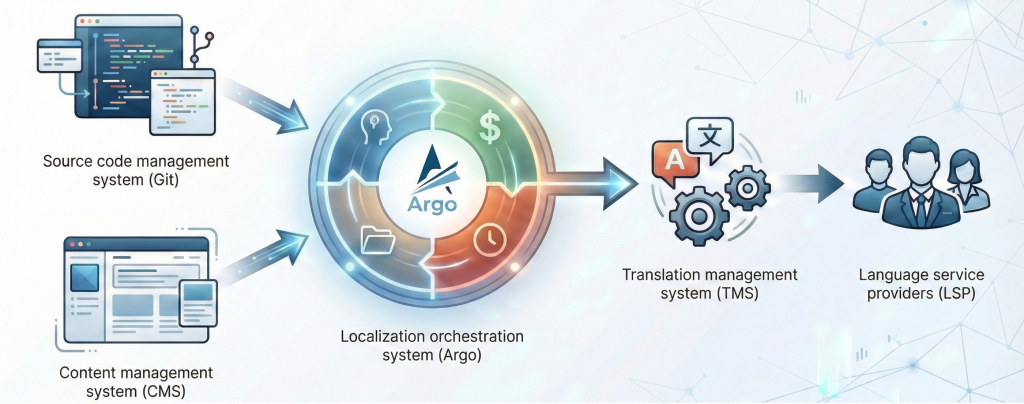

Argo is an API-first, microservices-based localization platform built around a hub-and-spoke architecture, with robust, highly configurable integrations. It centralizes control and data in a single source of truth while isolating systems, so changes or outages in one tool don’t impact the others.

Its plug-and-play design makes it easier to swap or migrate components, reduces integration complexity, and supports reliable scaling as localization needs grow.
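A minimal Python sketch can make the isolation idea concrete. It assumes each spoke (a CMS, TMS, or vendor connector) can be reduced to a single send function; the `Hub` class, its `plug_in` and `dispatch` methods, and the spoke names are hypothetical illustrations, not Argo’s actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# Hypothetical spoke: any CMS, TMS, or vendor adapter reduced to one send() call.
Send = Callable[[str], str]


@dataclass
class Hub:
    spokes: Dict[str, Send] = field(default_factory=dict)

    def plug_in(self, name: str, send: Send) -> None:
        # Adding or swapping a spoke replaces a single registry entry;
        # no other spoke is touched.
        self.spokes[name] = send

    def dispatch(self, name: str, payload: str) -> Tuple[bool, str]:
        # Errors are caught per call, so an outage in one spoke never
        # propagates to work routed to the others.
        try:
            return True, self.spokes[name](payload)
        except Exception as exc:
            return False, f"{name} unavailable: {exc}"


def legacy_tms(payload: str) -> str:
    raise ConnectionError("timeout")  # simulate an outage


hub = Hub()
hub.plug_in("tms-legacy", legacy_tms)
hub.plug_in("tms-new", lambda payload: f"job created for {payload}")
print(hub.dispatch("tms-legacy", "home.html"))  # (False, 'tms-legacy unavailable: timeout')
print(hub.dispatch("tms-new", "home.html"))     # (True, 'job created for home.html')
```

Registering a legacy and a new TMS connector side by side, as in this example, is also one way a pilot or A/B comparison could be wired up without touching the rest of the platform.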

Additionally, specialized “Agents” can be developed and integrated with Argo, giving customers an even broader range of capabilities.

#2 – All content, one request hub

Principle: Request governance (enforce clear rules, prevent problems up front)

CMS plugins that trigger translation requests work well in many situations.

But plugin capabilities are still constrained by the CMS environment.

Initiating translation requests outside the CMS unlocks more possibilities and gives you greater control over the process.

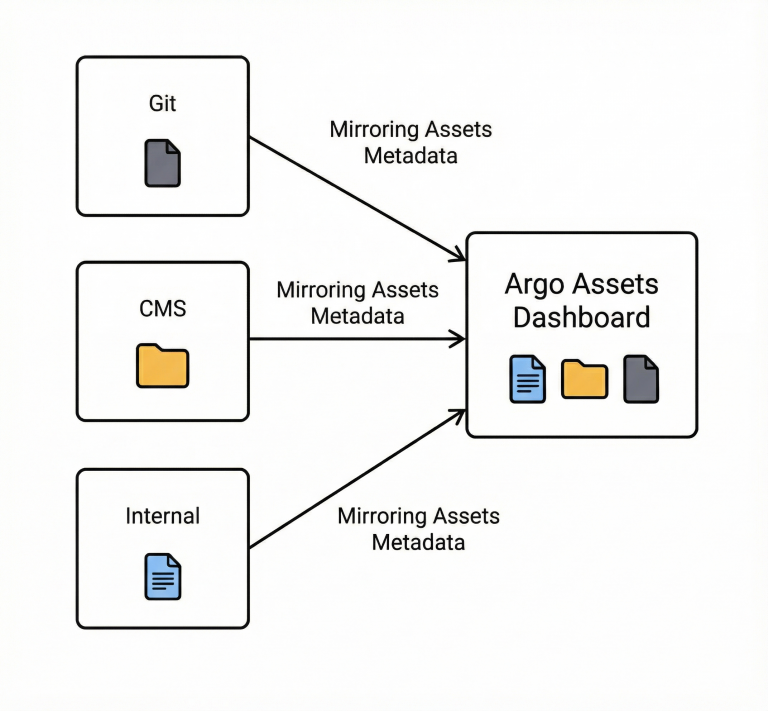

The Assets Dashboard in Argo is a centralized workspace that lets users and automation tools browse and search content across one or more content platforms, then select assets and create translation requests directly in Argo.

It includes safeguards that prevent users from requesting assets that are already part of an active request, assets that shouldn’t be translated yet (for example, draft content), or assets that haven’t changed since they were last translated.
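The three safeguards can be expressed as simple pre-submission checks. This Python sketch assumes that each asset exposes a CMS status and that a content hash is stored when the asset is last translated; the `Asset` fields, the `"draft"` status value, and `check_request` are hypothetical, not Argo’s actual data model.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Asset:
    asset_id: str
    status: str                                  # assumed CMS states, e.g. "draft" or "published"
    content: str
    last_translated_hash: Optional[str] = None   # hash recorded when last sent for translation


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def check_request(asset: Asset, active_asset_ids: Set[str]) -> List[str]:
    """Return the reasons an asset cannot be requested; an empty list means it passes."""
    problems = []
    if asset.asset_id in active_asset_ids:
        problems.append("already in an active request")
    if asset.status == "draft":
        problems.append("not ready for translation (draft)")
    if asset.last_translated_hash == content_hash(asset.content):
        problems.append("unchanged since last translation")
    return problems


about = Asset("about", "published", "About us", content_hash("About us"))
news = Asset("news", "draft", "Launch news")
print(check_request(about, {"news"}))  # ['unchanged since last translation']
print(check_request(news, {"news"}))   # ['already in an active request', 'not ready for translation (draft)']
```

Returning every reason, rather than stopping at the first, lets a dashboard explain exactly why an asset is blocked.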

It also shows which requests are associated with each asset, improving visibility and governance.

Overall, the Assets Dashboard creates a unified request-creation experience across content platforms, reduces CMS-side customization and dependence on CMS operators, and lowers operational risk by preventing duplicate or invalid requests.

#3 – Smart, adaptive orchestration across systems

Principle: Adaptable orchestration (edit mid-flight, handle exceptions, support human intervention)

In many systems, once a job is in motion, the path is effectively locked. When something unexpected happens, teams end up filing tickets, applying workarounds, or restarting the process.

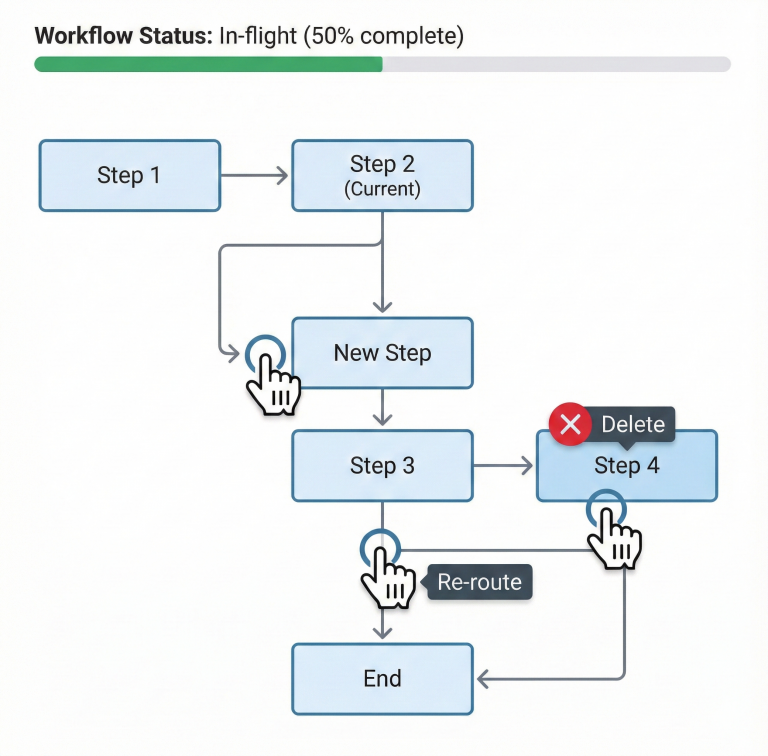

Argo sits above the connected tools as an orchestration layer. It coordinates exports, handoffs, and imports across CMSs (including Git-based systems), TMSs, and service providers, while keeping state, decisions, and context in one place.

In practice, this orchestration is executed through Argo workflows. A typical workflow exports content packages from the source system (for example, translatable assets, non-translatable assets, reference files, and preview packages), dispatches assets to the appropriate TMS or service provider, retrieves translated deliverables, and imports finalized content back into the source system.

Because the orchestration is stateful and policy-driven, it can also route work dynamically. For example, it can automatically select the right TMS or service provider based on CMS metadata, or route non-translatable assets for special handling.
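Metadata-driven routing can be modeled as an ordered list of rules where the first match wins. In this Python sketch, the metadata keys (`translatable`, `content_type`, `cms`) and the target names are invented for illustration; real rules would come from each customer’s configuration.

```python
from typing import Callable, Dict, List, Tuple

Metadata = Dict[str, object]

# Hypothetical policy: evaluated top to bottom, first matching rule wins.
ROUTING_RULES: List[Tuple[Callable[[Metadata], bool], str]] = [
    (lambda meta: meta.get("translatable") is False, "special-handling-queue"),
    (lambda meta: meta.get("content_type") == "legal", "vendor-legal-review"),
    (lambda meta: meta.get("cms") == "git", "tms-software-strings"),
]
DEFAULT_TARGET = "tms-marketing"


def route(meta: Metadata) -> str:
    """Pick the TMS, vendor, or queue for an asset based on its CMS metadata."""
    for matches, target in ROUTING_RULES:
        if matches(meta):
            return target
    return DEFAULT_TARGET


print(route({"cms": "git", "content_type": "ui"}))   # tms-software-strings
print(route({"cms": "aem", "translatable": False}))  # special-handling-queue
print(route({"cms": "aem"}))                          # tms-marketing
```

Keeping rules as ordered data, rather than branching code, makes the policy easy to review and makes precedence explicit when an asset matches more than one rule.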

Most importantly, orchestration remains editable mid-flight. Workflows are configurable and composable, so they can be adjusted while a request is in progress. This enables practical changes such as excluding problematic files, sending late-added content as a new batch, or inserting remediation steps (e.g., re-retrieving assets from a TMS and resubmitting them for import into the CMS) without restarting the request.
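One way to picture a workflow that can be edited mid-flight is to keep completed steps as history and pending steps as an editable queue. The Python sketch below uses the export, dispatch, retrieve, and import stages of a typical workflow; the `Workflow` class and its methods are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Workflow:
    """Hypothetical stateful workflow: done steps are history, pending steps stay editable."""
    pending: List[str]
    files: Set[str]
    done: List[str] = field(default_factory=list)

    def run_next(self) -> str:
        step = self.pending.pop(0)
        self.done.append(step)  # completed work is recorded, never replayed
        return step

    def exclude_file(self, name: str) -> None:
        # Remaining steps simply stop processing the problematic file.
        self.files.discard(name)

    def insert_next(self, *steps: str) -> None:
        # Remediation steps run before whatever is already queued.
        self.pending[0:0] = steps


wf = Workflow(pending=["export", "dispatch", "retrieve", "import"],
              files={"home.html", "broken.xml"})
for _ in range(3):
    wf.run_next()                  # export, dispatch, retrieve
wf.exclude_file("broken.xml")      # drop a file that keeps failing
wf.insert_next("re-retrieve")      # fetch fresh deliverables before import
print(wf.done, wf.pending, wf.files)
```

Because nothing already completed is rolled back, the request continues from where it was rather than starting over.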

#4 – Empowering project managers, all in one place

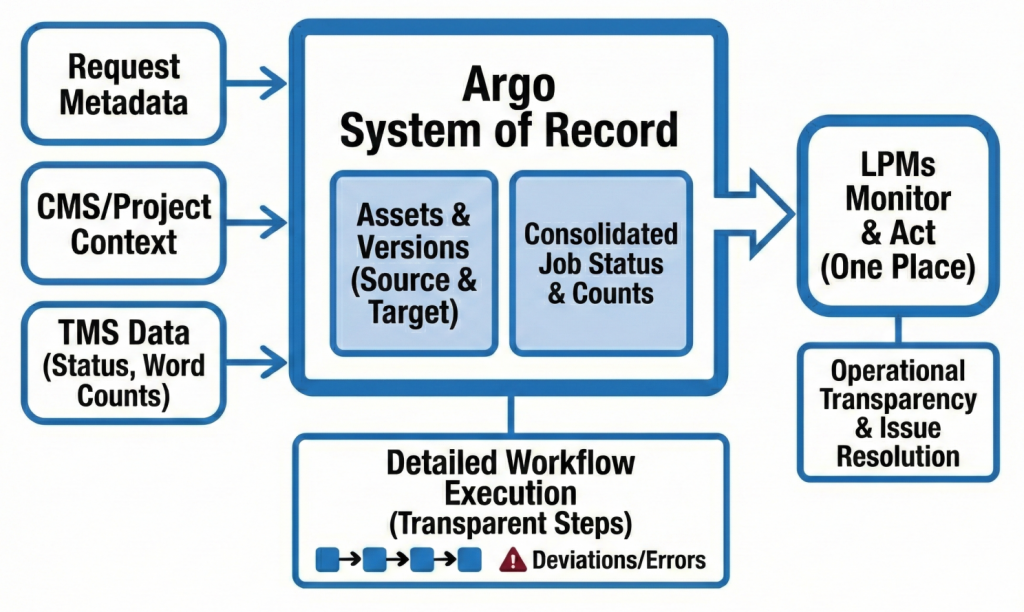

Principle: System of record (maintain a full audit trail in one place)

Argo is designed to be the cockpit for project managers.

It maintains a complete, auditable system of record for each request by consolidating request metadata; CMS, project, and asset context; a unified view of project and job status (including word counts) pulled from connected TMSs; and all versions of the associated source and target assets.
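The system-of-record idea can be sketched as one record per request that appends new asset versions instead of overwriting them, so every source and target revision stays auditable. This Python sketch is illustrative only; `RequestRecord`, its fields, and the `"source"` locale key are hypothetical, not Argo’s schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class RequestRecord:
    """Hypothetical consolidated record for one translation request."""
    request_id: str
    metadata: Dict[str, str]                                 # e.g. requester, CMS, project
    tms_jobs: Dict[str, str] = field(default_factory=dict)   # TMS job id -> status
    versions: Dict[Tuple[str, str], List[str]] = field(default_factory=dict)

    def add_version(self, asset: str, locale: str, content: str) -> int:
        """Append a version ("source" or a target locale); earlier versions are kept."""
        history = self.versions.setdefault((asset, locale), [])
        history.append(content)
        return len(history)  # 1-based version number

    def latest(self, asset: str, locale: str) -> str:
        return self.versions[(asset, locale)][-1]


rec = RequestRecord("REQ-42", {"cms": "aem", "requester": "marketing"})
rec.tms_jobs["job-7"] = "in_progress"
rec.add_version("home.html", "source", "Welcome")
rec.add_version("home.html", "de-DE", "Willkommen")
print(rec.add_version("home.html", "de-DE", "Herzlich willkommen"))  # 2
print(rec.latest("home.html", "de-DE"))                               # Herzlich willkommen
```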

This lets project managers monitor progress without living in multiple tools.

For operational transparency, Argo also provides detailed visibility into each step of workflow execution, so the process never becomes a “black box.”

That helps project managers pinpoint where and why a request is deviating and address issues such as outages or network errors.
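Step-level visibility usually comes down to recording an event for every step transition. This Python sketch assumes a simple event shape (`StepEvent` with a step name, status, timestamp, and detail) and shows how the earliest failure pinpoints where a request began to deviate; the names and status values are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class StepEvent:
    step: str
    status: str        # assumed values: "started", "succeeded", "failed"
    at: datetime
    detail: str = ""


def first_failure(events: List[StepEvent]) -> Optional[StepEvent]:
    """Return the earliest failed step, i.e. where the request started to deviate."""
    failures = [e for e in events if e.status == "failed"]
    return min(failures, key=lambda e: e.at) if failures else None


def at(minute: int) -> datetime:
    return datetime(2024, 1, 1, 9, minute, tzinfo=timezone.utc)


log = [
    StepEvent("export", "succeeded", at(0)),
    StepEvent("dispatch", "failed", at(5), "TMS API timeout"),
    StepEvent("dispatch", "succeeded", at(9), "retried"),
    StepEvent("import", "failed", at(20), "CMS returned HTTP 503"),
]
failure = first_failure(log)
print(failure.step, "-", failure.detail)  # dispatch - TMS API timeout
```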

#5 – Collaboration that keeps localization moving

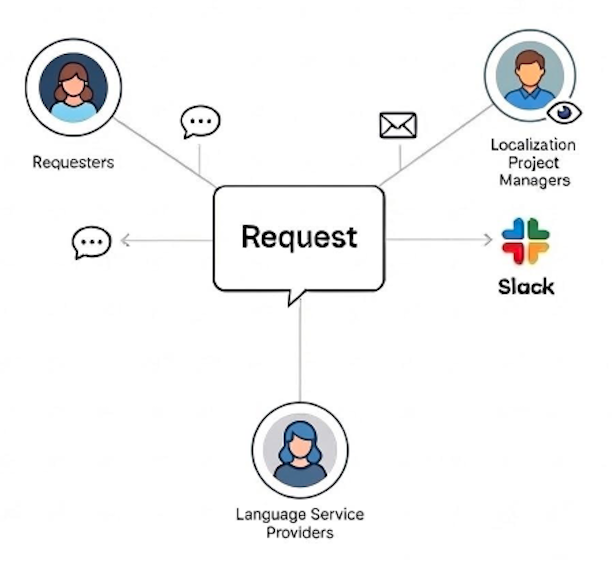

Principle: Cross-team collaboration (coordinate quickly to resolve issues)

Enterprise localization has many use cases: some workflows are fully automated and support lights-out operations, while others require collaboration across many participants.

Argo’s collaboration capabilities keep all stakeholders, including requesters, project managers, and service providers, aligned throughout the lifecycle of a request.

The Comments feature allows users to discuss work directly within a request and notifies participants via email, much like a ticketing system.

To enhance visibility, the Watchers feature allows users to follow specific requests or loop in teammates (e.g., for coverage while out of the office). Users can also filter their dashboard to find watched or commented-on requests.
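The Comments and Watchers behavior can be summarized as a recipient calculation: everyone on the request plus everyone watching it, minus the person who wrote the comment. The Python sketch below is a hypothetical model of that rule (the `Request` class and email addresses are invented), not Argo’s implementation; actual email delivery is left out.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class Request:
    """Hypothetical request with core participants and opt-in watchers."""
    participants: Set[str]
    watchers: Set[str] = field(default_factory=set)
    comments: List[Tuple[str, str]] = field(default_factory=list)

    def watch(self, user: str) -> None:
        self.watchers.add(user)

    def comment(self, author: str, text: str) -> Set[str]:
        """Store the comment and return who should be notified (everyone but the author)."""
        self.comments.append((author, text))
        return (self.participants | self.watchers) - {author}


req = Request(participants={"requester@example.com", "pm@example.com", "vendor@example.com"})
req.watch("backup-pm@example.com")  # coverage while the PM is out of the office
recipients = req.comment("pm@example.com", "Source updated; please re-check the FAQ page.")
print(sorted(recipients))
# ['backup-pm@example.com', 'requester@example.com', 'vendor@example.com']
```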

For real-time updates, Argo also integrates directly with Slack.

#6 – Ops data & KPI insights

Principle: End-to-end visibility (track operational metrics across the ecosystem, not just in the TMS)

As the hub of the localization platform, Argo centralizes operational data and key localization KPIs, such as OTD (on-time delivery) and TAT (turnaround time), across connected systems. This means users don’t have to chase information across the disparate tools Argo integrates with. Argo can be tailored to pull curated data from each system and present it in one place, making reporting significantly easier for project managers.
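OTD and TAT are straightforward to compute once delivery timestamps from every connected system land in one place. This Python sketch assumes one common definition of each (OTD as the share of requests delivered on or before the due date, TAT as the mean time from request creation to delivery); organizations often define them differently, for example per language or in business hours, and the `Delivery` type is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Delivery:
    created: datetime
    due: datetime
    delivered: datetime


def on_time_delivery_rate(items: List[Delivery]) -> float:
    """OTD: fraction of requests delivered on or before their due date."""
    if not items:
        return 0.0
    return sum(d.delivered <= d.due for d in items) / len(items)


def average_turnaround(items: List[Delivery]) -> timedelta:
    """TAT: mean elapsed time from request creation to final delivery."""
    if not items:
        return timedelta(0)
    return sum((d.delivered - d.created for d in items), timedelta(0)) / len(items)


def day(n: int) -> datetime:
    return datetime(2024, 3, n)


items = [
    Delivery(created=day(1), due=day(4), delivered=day(3)),  # on time, 2 days
    Delivery(created=day(1), due=day(3), delivered=day(4)),  # late, 3 days
    Delivery(created=day(2), due=day(6), delivered=day(6)),  # on time, 4 days
]
print(f"OTD: {on_time_delivery_rate(items):.0%}")  # OTD: 67%
print(f"TAT: {average_turnaround(items)}")         # TAT: 3 days, 0:00:00
```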

Argo can retrieve word counts and translated word counts from connected TMSs by default, and can optionally pull scoping and TM leverage data (e.g., fuzzy-match metrics) when TMS APIs support it.

Users can build reports in the Argo UI, export data (e.g., as CSV), and integrate Argo data into external analytics platforms such as Tableau via exports or APIs.
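For teams that feed exports into tools like Tableau, a flat CSV with one row per request is usually the easiest shape to work with. This Python sketch uses only the standard `csv` module; the column names are hypothetical and would depend on which metrics a given deployment collects.

```python
import csv
import io
from typing import Dict, List

# Hypothetical column set for a per-request KPI export.
FIELDS = ["request_id", "word_count", "on_time", "tat_hours"]


def export_kpis_csv(rows: List[Dict[str, object]]) -> str:
    """Serialize per-request metrics as CSV text (header row first)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


text = export_kpis_csv([
    {"request_id": "REQ-1", "word_count": 1200, "on_time": True, "tat_hours": 46},
    {"request_id": "REQ-2", "word_count": 350, "on_time": False, "tat_hours": 80},
])
print(text)
```

By default, `csv.DictWriter` raises a `ValueError` when a row contains a key that is not in `fieldnames`, which helps catch schema drift before a broken file reaches a dashboard.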

Are you considering building an enterprise localization platform?

Building one isn’t trivial. But once it’s in place, you can spend less time dealing with operational issues and more time driving business impact.

If you’re exploring how to build or rebuild a localization platform, we’d be happy to share what we’ve learned. Argo has served as the foundational tool behind our customers’ enterprise localization platforms since 2018, and you can read their testimonials on our website.

Reach out to us at hello@spartansoftwareinc.com or connect with me on LinkedIn if you’d like to learn more about Spartan and Argo.

Thank you for reading. I hope you found this article helpful.

Yan